Abstract

Currently, winners of hackathons and high-tech prize competitions (together, “Competitions”) are determined by a group of judges who select the winning team. The organizers of hackathons and bounties may invite such judges based on their expertise, technical proficiency and/or relationships. Judges typically range from investors and sponsoring companies to technologists. However, this centralized system of i) judge selection and ii) judge discernment is problematic. First, judges may not possess sufficient knowledge of the theme or subject matter of the Competition. In addition, non-technical judges may ask the wrong questions or may not understand the intricacies of how the code interplays with the business model. Furthermore, judges may easily be subjective in their discernment, even though they are often asked to judge teams on creativity, innovation, purpose, quality of the code, the theme of the hackathon and the business model, among other criteria.

Because blockchain is a distributed ledger with no central intermediary, it allows for a consensus mechanism that can change how judges are selected and governed: better governance for judges would produce outcomes that take quality, inclusion and fairness into consideration. A consensus mechanism generally validates the next block in the chain and prevents adversaries from attacking and forking the blockchain. Common consensus models include Proof of Work, Proof of Stake, Delegated Proof of Stake and Practical Byzantine Fault Tolerance (PBFT). This paper outlines a consensus mechanism for the Kambria Network that implements more reliable and better governance for judges.

In addition, this paper sets forth the terms, mathematics, probability and game theory that help to ensure that judges adhere to a set of predefined rules. By taking into consideration potential conflicts of interest, inherent bias and subjectivity, and possible malevolent intent, the protocol contains two prongs that keep it robust and help maintain a fault tolerance of ½ through our consensus model. The consensus will fail if the proportion of faults exceeds ½. However, a set of predefined rules can keep this parameter below the ½ threshold. The consensus is then reliable and practical to implement.
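To make the ½ threshold concrete, here is a minimal sketch of a plain majority vote among judges. It is an illustration only and not the Kambria protocol itself: the function names, the team labels and the assumption that every faulty judge votes for the same wrong team are all hypothetical.

```python
from collections import Counter


def majority_winner(votes):
    """Return the team holding a strict majority of votes, or None if no team does."""
    if not votes:
        return None
    team, count = Counter(votes).most_common(1)[0]
    # A strict majority means more than half of all votes cast.
    return team if count * 2 > len(votes) else None


def simulate(n_judges, n_faulty, honest_choice="team_a", faulty_choice="team_b"):
    """Hypothetical worst case: all faulty judges collude on the same wrong team."""
    votes = [faulty_choice] * n_faulty + [honest_choice] * (n_judges - n_faulty)
    return majority_winner(votes)


if __name__ == "__main__":
    for faulty in (4, 5):
        print(f"9 judges, {faulty} faulty ->", simulate(9, faulty))
```

Under these assumptions, the honest choice prevails only while fewer than half of the judges are faulty. Once faulty judges form a majority the vote follows them, and an exact tie produces no winner at all.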

Introduction

Hackathons are often designed to create functional software or hardware that solves a particular problem by the end of the event. Software developers and other subject matter experts form teams and collaborate intensively from early morning throughout the day. At the end of a hackathon, each team demonstrates its solution and presents its results, and a panel of judges selects the winning teams for the prize. For example, Salesforce ran a hackathon with a prize of $1 million for the winners, and TechCrunch offered a $250,000 prize for a social gaming hackathon. Such hackathons leverage the collective technical minds of the community to solve a problem using a new field of technology, to develop new software technologies within a short amount of time, and/or to locate innovation for funding and further development.

In the same spirit as hackathons, many organizations run public competitions to tap the power of the tech collective, seeking solutions in everything from AI to spacecraft. For example, NASA sponsored its Unmanned Aircraft Systems Airspace Operations Challenge, which focuses on a drone’s ability to sense and avoid air traffic. In the Google Lunar XPRIZE, a team must successfully place a robot on the moon’s surface that explores at least 500 meters of the moon and transmits images and video back to Earth. Likewise, the Brain Preservation Foundation announced a cash prize for the first individual to preserve an entire human brain for over 100 years such that every neuronal process and synaptic connection remains intact and traceable using electron microscopic technologies.

A critical component of hackathons and public competitions is the role of judges. At many hackathons, the judging panel is composed of organizers and sponsors. Similarly, judges for public competitions also comprise organizers and sponsors. Their task is to evaluate each team and its solution before selecting a winning team for the prize. Ideally, the criteria for each team include the business value and technical complexity of its solution, its relative wow factor, user experience and design, whether the solution is functional, and whether it is innovative. Such criteria serve as a guideline for judges, especially those who have never judged or attended such an event, so that they know what to expect. However, despite a list of criteria such as usefulness, originality, impact and complexity, the judges' ratings may not be fair.

The biggest challenge with hackathons and public competitions lies in the governance of judges and the judging process. For instance, are hackathons merely a way for organizations and their sponsors to crowdsource ideas from the tech community without compensation? Are organizations running a public competition to harvest intellectual property from the crowd with no intent whatsoever to select a winner? Is there an inherent conflict of interest if the organizers of hackathons or public competitions also serve as judges? Would the judges award the prize to a polished, functional solution even though it was obviously created before the hackathon?

To address the risk of subjectivity and malevolent intent among judges, the Innovation Judge Protocol proposes a new consensus mechanism that resolves the existing limitations of governance.

Click here to read the full Kambria Innovation Judge Protocol.

The Kambria Team

Email: info@kambria.io

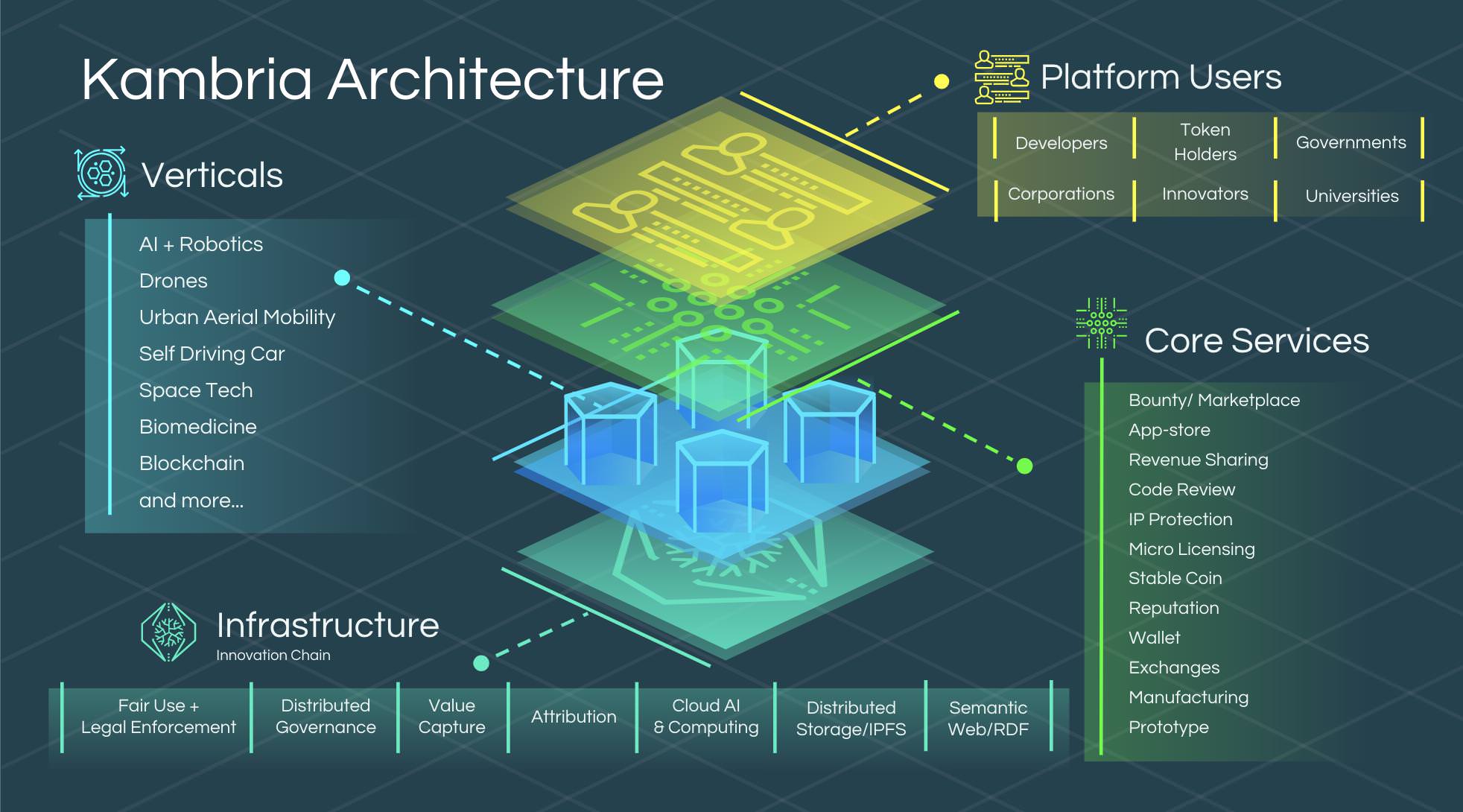

KAT is a token used on the Kambria platform.