✨ Why is image tagging important?

You've heard us say it before: data is the new oil. But what does that mean? Simply put, in this digital age, data has become perhaps the most valuable asset on the planet. But data in and of itself only becomes truly usable after it has been "labeled."

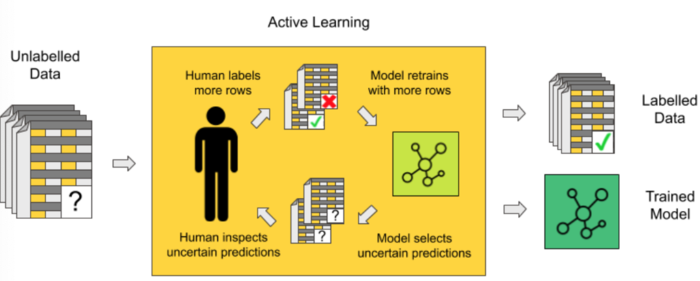

Data labeling, also known as data annotation, is the process of attaching identifying information to data so that machine learning models can learn from it through supervised learning. Image tagging is the subset of data labeling in which images are labeled according to their content or characteristics. A labeled dataset is one whose samples have each been tagged with one or more labels identifying their properties, their classifications, and/or the objects they contain. When a model is trained on accurately labeled data, it learns to recognize the same characteristics in new, unlabeled data and to predict outcomes from it. This is what is meant by supervised learning.

Data annotation teaches programs what they need to learn and how to distinguish between various inputs to produce accurate outputs. Thanks to data and image labeling, companies are able to train, validate, and fine-tune their models for maximum accuracy and efficiency. The goal is to teach machines to see what we see. What do you see in a photograph? A dog? A tree? Or a complex scene with many objects, people, and activities?

The more complex a scene is, the more difficult it is for machines to accurately understand the relationship between each object and its surroundings. The first step in training these machine learning algorithms is to gather a large amount of accurately labeled data. That is where teams of people, in this case our community, come in.

How will the data from this bounty be used?

The purpose of the OhmniLabs Image Tagging Bounty is to involve our dedicated community in creating a comprehensive dataset that can be used to improve the way Ohmni robots interact with their environment. Because many of OhmniLabs' customers lack the mobility or health to handle even some of the simplest tasks, the company is pushing the boundaries of innovation and working to develop the next generation of robotic technology. By improving their semantic perception engine, the robots will gain the ability to better understand and make sense of their environment, giving Ohmni the ability to manipulate and interact with its surroundings.

Through machine learning, OhmniLabs aims to use labeled images to train Ohmni to identify its surroundings so that it can perform tasks such as opening a door, grabbing an object, navigating autonomously, identifying a crisis (like when a person needs medical attention), and many others. This is a step towards building advanced robots that can tackle these important tasks and improve the value proposition of affordable robotics as a whole.

The first challenge will focus on one simple but important object: doorknobs. Labeled doorknob images will help “open the door” to training Ohmni to operate more autonomously in various settings, such as caring for people with disabilities, office spaces, and hospitals.

Learn more about the OhmniLabs Image Labeling Bounty

To learn more about this challenge and how to take part in the OIL Challenge, please visit https://blog.kambria.io/ohmnilabs-image-labeling-bounty-announced/ . Registration is open now, so be sure to sign up!

💸 Earn Rewards

As a participant, you can earn rewards for accurately labeling images. All of the tasks in this bounty are easy to complete, and no technical knowledge of machine learning is required to participate. Join us!

📖 Register Today!

Space is limited, so register today at: http://bit.ly/OIL-Bounty-Challenge

Registration is now open!

📅 Open for Registration: 07:30 PM UTC+7, March 13th, 2020 - 11:59 PM UTC+7, March 27th, 2020

📅 Labeling Period: 12:00 AM UTC+7, March 28th, 2020 - 11:59 PM UTC+7, April 12th, 2020